The transition from a frozen model in a lab to a live tool in a hospital is the most dangerous phase of the AI lifecycle. This is where the “Validation Gap” becomes visible: fewer than 20% of published AI studies undergo rigorous prospective validation.

The Validation Gap

Retrospective testing (evaluating a model on historical data) is comparatively easy. Prospective testing (evaluating it on patients as they present, after deployment) is hard. It matters because a prospective trial can measure operational metrics that cannot be observed in a lab, such as:

- Time-to-diagnosis improvements.

- Changes in clinician cognitive load.

- Changes in patient throughput.
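To make the first of these metrics concrete, here is a minimal sketch that compares median time-to-diagnosis between a baseline period and an AI-assisted period. It assumes each case is recorded as a hypothetical (referral time, diagnosis time) pair, and the timestamps are toy values, not trial data. A simple before/after comparison like this is exposed to confounding (staffing or case-mix changes), which is one reason prospective studies often use concurrent controls or randomization.

```python
from datetime import datetime, timedelta
from statistics import median


def hours_to_diagnosis(cases):
    """Convert hypothetical (referral_time, diagnosis_time) pairs into intervals in hours."""
    return [(diagnosed - referred).total_seconds() / 3600 for referred, diagnosed in cases]


def median_improvement_hours(baseline, with_ai):
    """Median time-to-diagnosis in the baseline period minus the AI-assisted period.

    A positive result means patients were diagnosed faster in the AI-assisted period.
    """
    return median(hours_to_diagnosis(baseline)) - median(hours_to_diagnosis(with_ai))


# Toy timestamps for illustration only (not trial data).
t0 = datetime(2024, 1, 1, 8, 0)
baseline = [(t0, t0 + timedelta(hours=h)) for h in (48, 72, 24)]
with_ai = [(t0, t0 + timedelta(hours=h)) for h in (24, 36, 12)]

print(median_improvement_hours(baseline, with_ai))  # 24.0
```

The median is used rather than the mean because diagnostic intervals tend to be skewed by a few very long cases.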

Failure Mode (Demographic Bias): Models frequently fail when validated on populations that were underrepresented in their training data. A model trained on data from a wealthy urban center may perform poorly in a rural community hospital, exacerbating healthcare disparities.
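One way to surface this failure mode before deployment is to stratify evaluation by subgroup instead of reporting a single pooled metric. The sketch below computes sensitivity separately per subgroup; the "urban"/"rural" tags, the toy labels, and the choice of sensitivity as the metric are illustrative assumptions. A real audit would also examine specificity, calibration, and confidence intervals, since subgroups are often small.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """True-positive rate computed separately for each subgroup.

    y_true and y_pred hold 0/1 labels; groups holds one subgroup tag per case.
    A subgroup with no positive cases maps to None (sensitivity is undefined there).
    """
    positives = defaultdict(int)
    true_positives = defaultdict(int)
    tags = set(groups)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                true_positives[group] += 1
    return {
        g: (true_positives[g] / positives[g] if positives[g] else None)
        for g in sorted(tags)
    }


def largest_gap(rates):
    """Difference between the best- and worst-served subgroups, ignoring undefined ones."""
    defined = [r for r in rates.values() if r is not None]
    return max(defined) - min(defined) if defined else 0.0


# Toy labels: five cases from each hypothetical site type.
y_true = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 1, 0, 1, 0, 0, 1, 0]
groups = ["urban"] * 5 + ["rural"] * 5

rates = sensitivity_by_group(y_true, y_pred, groups)
print(rates)               # {'rural': 0.5, 'urban': 1.0}
print(largest_gap(rates))  # 0.5
```

Pooled over all ten toy cases, sensitivity is 6/8 = 0.75, a single figure that hides the fact that rural positives are missed half the time.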

Stage 3: The “Dataset Shift” & The Assumption Problem

All AI systems operate under a fundamental assumption: that the future will look like the past. In healthcare, this assumption is frequently violated. Clinical practices evolve, new treatments emerge, and patient populations shift.

The result is “dataset shift”: a mismatch between the data a model was validated on and the data it encounters in live use. A model that was validated yesterday may fail today because the environment has changed.

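- The Classic Example: When the US healthcare system switched from ICD-9-CM to ICD-10-CM diagnosis coding in October 2015, many predictive models broke overnight. ICD-10-CM codes are alphanumeric and three to seven characters long, so logic written against ICD-9-CM's mostly numeric, three-to-five-character codes no longer matched the incoming data.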

- The Imaging Risk: If a hospital changes its CT imaging protocol, the distribution of pixel intensities changes. An unmonitored AI model might interpret this technical change as pathology, degrading performance without raising any visible error.
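The ICD failure is easy to reproduce in miniature. In the sketch below, a hypothetical feature flags diabetes by matching ICD-9-CM category 250; fed ICD-10-CM codes, it does not crash, it simply returns False for every patient. The function names and the prefix table are illustrative assumptions; a production mapping would more likely start from the CMS General Equivalence Mappings (GEMs) than from hand-written prefixes.

```python
# Hypothetical feature written against ICD-9-CM: category 250 covers diabetes mellitus.
def has_diabetes_icd9(codes):
    return any(code.startswith("250") for code in codes)


# The same (hypothetical) patient's problem list, coded before and after the switch.
before = ["250.00", "401.9"]  # ICD-9-CM: type II diabetes w/o complication; essential hypertension
after = ["E11.9", "I10"]      # ICD-10-CM: type 2 diabetes w/o complications; essential hypertension

print(has_diabetes_icd9(before))  # True
print(has_diabetes_icd9(after))   # False, with no error raised


# One possible fix: make the coding system an explicit input. The prefix lists are
# deliberately incomplete (ICD-10-CM also has E08, E09, and E13 diabetes categories).
DIABETES_PREFIXES = {
    "ICD-9-CM": ("250",),
    "ICD-10-CM": ("E10", "E11"),
}


def has_diabetes(codes, coding_system):
    prefixes = DIABETES_PREFIXES[coding_system]  # unknown system raises KeyError instead of failing silently
    return any(code.startswith(prefixes) for code in codes)


print(has_diabetes(after, "ICD-10-CM"))  # True
```

The design point is that the version-aware function fails loudly on a coding system it does not know, while the original feature degrades silently.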

When these changes occur, previously validated performance metrics become meaningless.
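For the imaging risk, one common way to quantify input drift is the Population Stability Index (PSI), which compares how a feature's values fall into bins in a reference window versus a current window: PSI = Σ (cᵢ − rᵢ) · ln(cᵢ / rᵢ) over bin proportions rᵢ and cᵢ. The sketch below is a minimal standard-library implementation. Monitoring a single per-study mean pixel intensity is a simplifying assumption (real pipelines would track several features, such as intensity histograms and acquisition metadata), and the 0.25 threshold often quoted for a "significant shift" is a heuristic from credit-risk practice, not a clinical standard.

```python
import math


def population_stability_index(reference, current, bins=10, eps=1e-6):
    """PSI between a reference sample and a current sample of one numeric feature.

    Uses equal-width bins spanning the reference range; values outside that range
    are clipped into the first or last bin, and eps keeps empty bins out of log(0).
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def bin_proportions(values):
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    ref_p = bin_proportions(reference)
    cur_p = bin_proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


# Toy per-study mean intensities (normalized to 0-1) before and after a protocol change.
old_protocol = [i / 100 for i in range(100)]
new_protocol = [0.3 + i / 200 for i in range(100)]

print(population_stability_index(old_protocol, old_protocol))  # 0.0
print(round(population_stability_index(old_protocol, new_protocol), 2))
```

Equal-width bins keep the example short; quantile-based bins are a common alternative when the reference distribution is heavily skewed.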

The Solution: Continuous Monitoring Infrastructure

Successful long-term deployment requires a robust monitoring system capable of detecting performance degradation before it impacts patient care. Organizations must establish continuous audit protocols that track:

- Prediction Distributions: Is the model flagging significantly more patients today than last month?

- Input Data Characteristics: Has the image quality or data format drifted?

- Clinical Outcomes: Are the predictions still correlating with ground truth?
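The three checks above can be combined into a periodic audit. The sketch below compares two reporting windows and returns plain-language alerts; the `MonitoringWindow` fields, the use of positive predictive value (PPV) as the outcome measure, and every threshold are hypothetical placeholders rather than clinical standards.

```python
from dataclasses import dataclass


@dataclass
class MonitoringWindow:
    """Summary of one reporting period (for example, one month). Field names are illustrative."""
    n_cases: int                # studies the model scored
    n_flagged: int              # studies the model flagged as positive
    n_failed_input_checks: int  # studies failing hypothetical quality/format checks
    n_flagged_adjudicated: int  # flagged studies whose ground truth is known so far
    n_flagged_confirmed: int    # of those, how many were confirmed positive


def audit(previous, current, max_rate_ratio=1.5, max_failed_fraction=0.02, min_ppv=0.6):
    """Compare two windows and return human-readable alerts (placeholder thresholds)."""
    alerts = []

    # 1. Prediction distribution: has the flag rate moved sharply in either direction?
    prev_rate = previous.n_flagged / previous.n_cases
    curr_rate = current.n_flagged / current.n_cases
    if prev_rate > 0:
        ratio = curr_rate / prev_rate
        if ratio > max_rate_ratio or ratio < 1 / max_rate_ratio:
            alerts.append(f"flag rate moved from {prev_rate:.1%} to {curr_rate:.1%}")

    # 2. Input data characteristics: are more studies failing format or quality checks?
    failed = current.n_failed_input_checks / current.n_cases
    if failed > max_failed_fraction:
        alerts.append(f"{failed:.1%} of inputs failed quality checks")

    # 3. Clinical outcomes: do flagged cases still hold up against ground truth?
    if current.n_flagged_adjudicated > 0:
        ppv = current.n_flagged_confirmed / current.n_flagged_adjudicated
        if ppv < min_ppv:
            alerts.append(f"positive predictive value fell to {ppv:.0%}")

    return alerts


last_month = MonitoringWindow(n_cases=1000, n_flagged=80, n_failed_input_checks=5,
                              n_flagged_adjudicated=70, n_flagged_confirmed=56)
this_month = MonitoringWindow(n_cases=1000, n_flagged=150, n_failed_input_checks=40,
                              n_flagged_adjudicated=100, n_flagged_confirmed=45)

for alert in audit(last_month, this_month):
    print(alert)
```

With these toy numbers all three checks fire. The sketch only tracks PPV; measuring missed cases (sensitivity) requires follow-up on unflagged patients, which usually arrives much later.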

Early detection of these shifts allows rapid corrective action, whether that means retraining the model, adjusting its parameters, or temporarily deactivating the system.
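How alerts translate into those three responses is an organizational decision. The sketch below shows one hypothetical escalation policy, plus a simple form of parameter adjustment: re-deriving the decision threshold so the model flags a chosen fraction of recent cases. The `Action` names, the ordering of the rules, and the threshold helper are illustrative assumptions, not a recommended protocol.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    ADJUST_THRESHOLD = "adjust decision threshold"
    RETRAIN = "retrain on recent data"
    DEACTIVATE = "temporarily deactivate"


def choose_action(input_drift, output_drift, outcomes_degraded):
    """Hypothetical escalation policy: the most severe applicable signal wins."""
    if outcomes_degraded:
        # Predictions no longer match ground truth: stop exposing clinicians to them.
        return Action.DEACTIVATE
    if input_drift:
        # Inputs differ from the training data: this policy escalates to retraining.
        return Action.RETRAIN
    if output_drift:
        # Inputs look stable but the flag rate moved: revisit the operating point.
        return Action.ADJUST_THRESHOLD
    return Action.CONTINUE


def recalibrate_threshold(scores, target_flag_rate):
    """Return the cutoff that flags roughly target_flag_rate of recent cases (score >= cutoff)."""
    ranked = sorted(scores, reverse=True)
    k = max(1, round(target_flag_rate * len(ranked)))
    return ranked[min(k, len(ranked)) - 1]


recent_scores = [0.95, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1]
print(choose_action(input_drift=False, output_drift=True, outcomes_degraded=False))
print(recalibrate_threshold(recent_scores, target_flag_rate=0.2))  # 0.9
```

Recalibrating to a historical flag rate assumes disease prevalence has not changed; if prevalence genuinely rose, forcing the old rate would hide real positives, so threshold changes of this kind need clinical review before they go live.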

Strategic Implication: The “Deployment Success” Factor

Sustainable AI is not about a static model frozen in time; it is about an adaptive system. Clinical deployment marks the beginning, not the end, of AI system management. Organizations must invest in infrastructure that allows for rapid intervention when the healthcare environment inevitably changes.

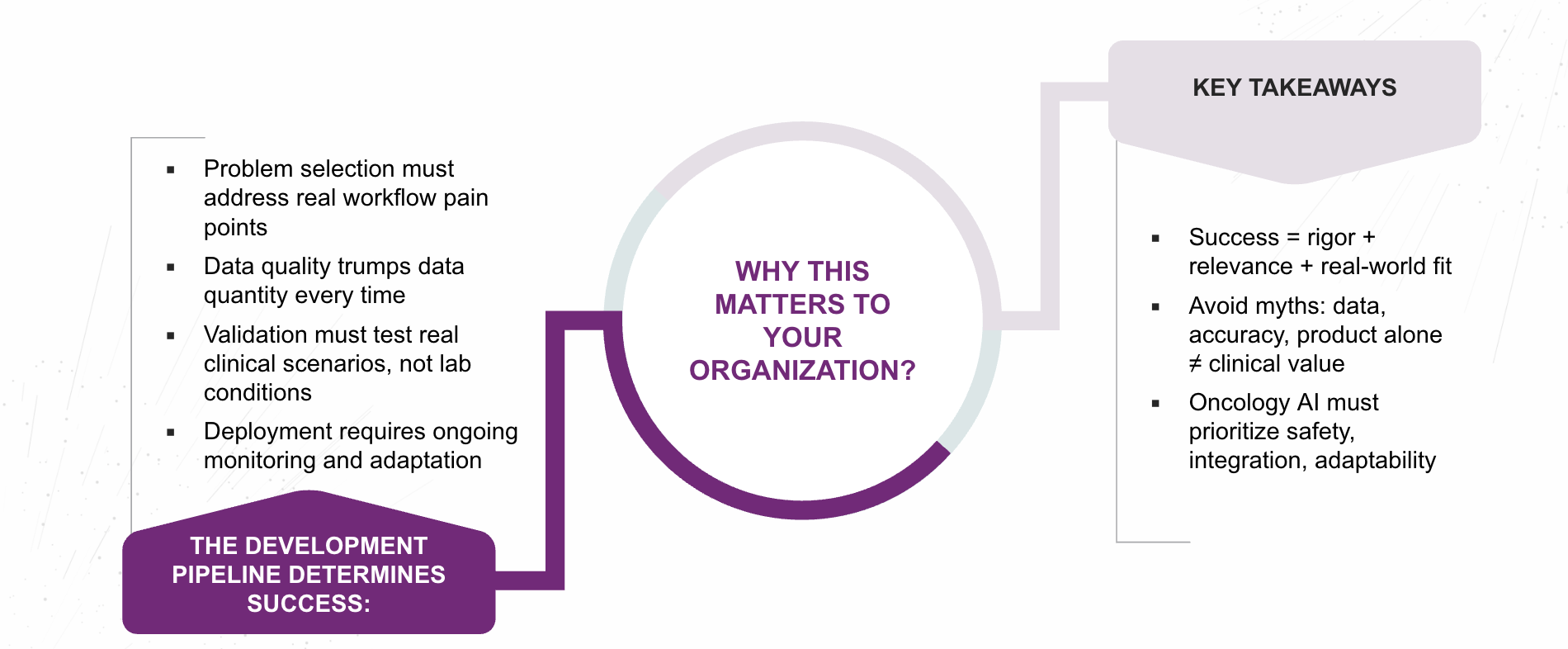

Series Conclusion: Redefining Success in Oncology AI

The promise of AI in oncology does not rest on the novelty of its algorithms, but on the integrity of its development process and the precision of its clinical alignment.

As the industry moves beyond experimental enthusiasm toward evidence-based implementation, success will depend on disciplined model design, transparent validation, and thoughtful integration into real-world workflows. AI systems built without this clinical grounding risk becoming isolated proofs of concept: technically sophisticated, yet operationally irrelevant.

Conversely, solutions that emerge from close collaboration between data scientists, medical physicists, oncologists, and informaticists stand the best chance of achieving measurable clinical value. These partnerships ensure that AI models are not only accurate but actionable, fitting naturally into oncology’s complex ecosystem of imaging, planning, delivery, and follow-up care.

Authored By: Padmasri Bhetanabhotla