Introduction: The Regulatory Imperative

As AI capabilities expand across healthcare, we face a critical tipping point. While the potential for improved patient outcomes is immense, comprehensive regulation has become essential to prevent unintended consequences and misuse.

Healthcare AI regulation is not just about bureaucracy; it targets fundamental concerns that directly impact patient safety and health equity. Specifically, regulators are focused on preventing:

- Bias Perpetuation: Ensuring training data doesn’t encode discrimination that excludes groups from proper care.

- Transparency Failures: Mandating documentation and audits to identify systemic inequities.

- Privacy Overreach: Protecting patient consent against intrusive AI-powered surveillance.

- Unaccountable Decisions: Ensuring diagnostic tools meet strict fairness standards.

- Emerging Threats: Combating deepfakes and medical misinformation.

The Global Regulatory Landscape

Currently, AI governance follows two distinct models, the fragmented U.S. approach and the unified EU framework, alongside nonbinding international efforts.

The United States: A Fragmented Approach

In the U.S., oversight is distributed across multiple agencies. The FDA regulates AI that qualifies as Software as a Medical Device (SaMD), while the FTC polices deceptive trade practices. Each agency is effective within its own remit, but calls for unified federal oversight are growing to close the gaps between them.

The European Union: A Unified Framework

The EU has taken a more centralized route with the EU Artificial Intelligence Act, proposed in 2021 and formally adopted in 2024. Building on the foundation of GDPR, the act provides a harmonized framework for “high-risk” AI systems, creating stronger accountability and documentation standards that healthcare organizations operating in Europe must meet.

International Efforts

Beyond national borders, organizations such as the UN and OECD are promoting nonbinding principles that emphasize transparency and inclusiveness, in an attempt to build global consensus on ethical AI.

Healthcare-Specific Challenges

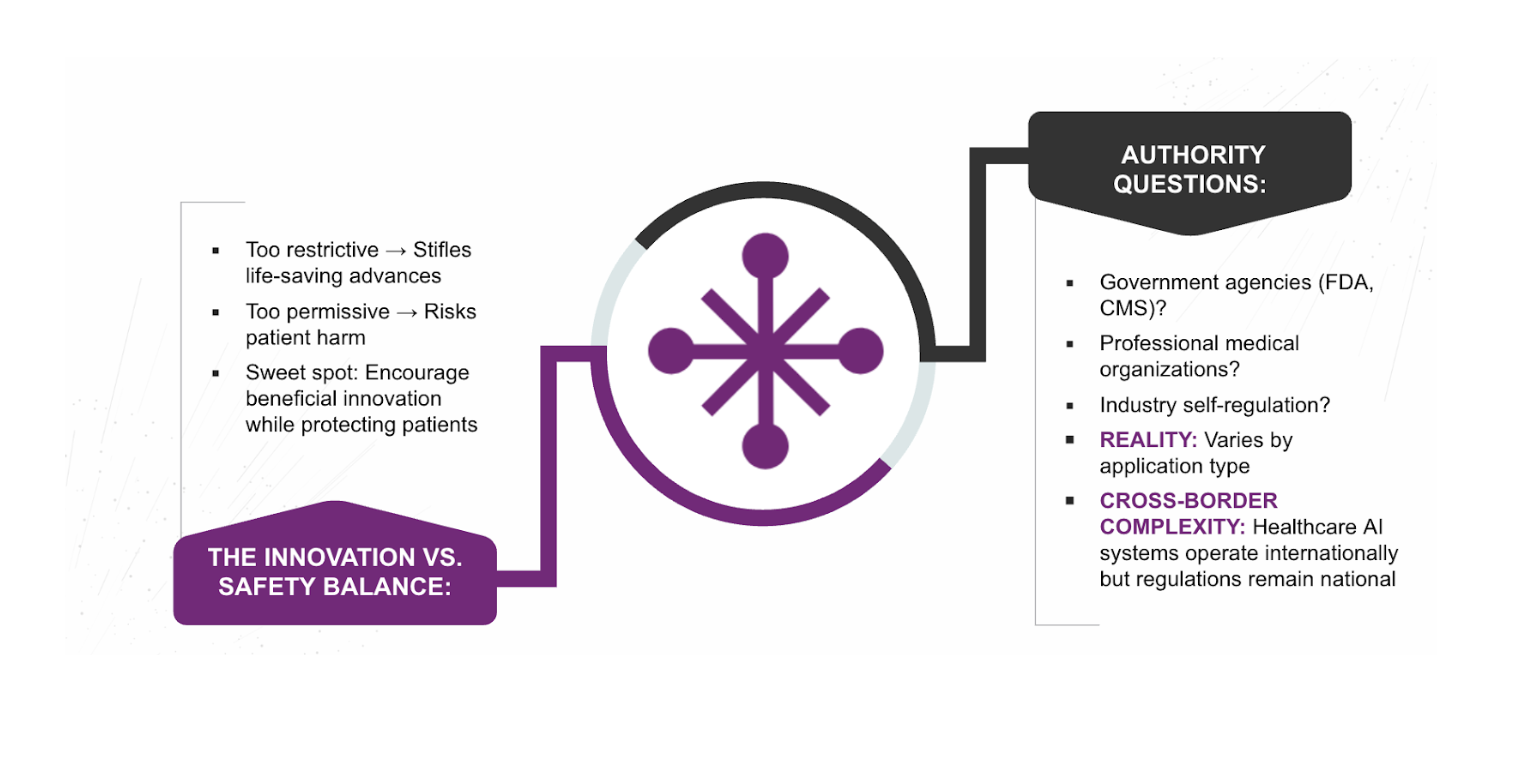

Regulating healthcare AI is uniquely difficult because the stakes are life-and-death. We face three persistent challenges:

- Innovation vs. Safety: This is the central tension. Over-regulation risks stifling life-saving progress, while under-regulation risks patient harm. Finding the “Goldilocks” zone is an ongoing struggle.

- Authority Fragmentation: Responsibility is currently unclear. Who owns the final decision: government agencies, medical boards, or the developers themselves?

- Cross-Border Systems: AI models are often built in one country and deployed in another. This requires a level of global coordination that does not yet exist.

Strategic Implication

Regulation must evolve to safeguard patients without stifling innovation. For healthcare leaders, the goal is not just compliance, but the establishment of standards that build clinician and public trust. Without that trust, even the most innovative tool will fail to see adoption.

Authored By: Padmasri Bhetanabhotla