The Double-Edged Sword

Healthcare AI systems have unprecedented potential to improve clinical decision-making and patient outcomes by drawing on health data. Yet that potential carries a heavy ethical responsibility.

Biased AI systems do not just fail; they can perpetuate and amplify existing disparities, actively harming vulnerable populations. To deploy AI safely, we must recognize that bias is not a single “bug” to be fixed—it is a pipeline-wide vulnerability.

The Pipeline-Wide Challenge

Bias infiltrates AI systems at every stage—from the moment data is collected to the final post-deployment monitoring.

Unlike ordinary performance errors, which might be random, bias systematically disadvantages specific groups. This exacerbates inequities that already exist in the healthcare system.

Beyond Technical Excellence: The “Average” Trap

We often assume that a high-performing model is a fair model. This is a dangerous misconception.

Consider a recent chest X-ray triage model that achieved an impressive AUC (area under the ROC curve) of 0.859. On the surface, it appeared ready for immediate deployment. However, a deeper bias audit revealed a disturbing reality: the model significantly under-diagnosed specific subpopulations:

- Female patients

- Younger patients

- Medicaid recipients

These are the very populations already underserved by the medical system. Technical excellence without equity creates tools that perform well “on average” yet fail those most in need.
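To make the "average" trap concrete, here is a minimal sketch of a subgroup audit. It is not the audit used for the chest X-ray model above; the function name `underdiagnosis_rate_by_group`, the record layout, and the toy data are all assumptions made for illustration. The idea is simply to compute the under-diagnosis rate (the share of truly positive patients the model missed) per group rather than only in aggregate.

```python
from collections import defaultdict


def underdiagnosis_rate_by_group(records):
    """Return {group: false-negative rate} among truly positive patients.

    Each record is a (group, y_true, y_pred) tuple with binary labels,
    where 1 means "finding present". Groups with no positive cases are
    omitted, since the rate is undefined for them.
    """
    positives = defaultdict(int)
    missed = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 0:
                missed[group] += 1
    return {g: missed[g] / positives[g] for g in positives}


# Hypothetical toy data for illustration only (not from any real study).
toy_records = [
    ("female", 1, 0), ("female", 1, 0), ("female", 1, 1), ("female", 0, 0),
    ("male", 1, 1), ("male", 1, 1), ("male", 1, 1), ("male", 1, 0), ("male", 0, 0),
]

# Per-group rates expose gaps that a single pooled number hides.
rates = underdiagnosis_rate_by_group(toy_records)

# Pooled rate: relabel every record with the same group name.
pooled = [("all", y_true, y_pred) for _, y_true, y_pred in toy_records]
overall = underdiagnosis_rate_by_group(pooled)["all"]

for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.2f}")
print(f"overall: {overall:.2f}")
```

In this toy data the pooled miss rate sits between the two groups, so reporting it alone would hide that one group is missed far more often than the other.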

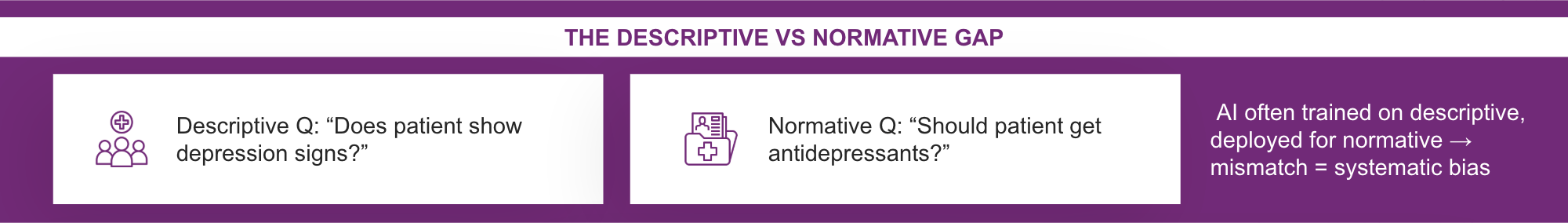

The Language of Bias

This risk extends beyond imaging. Large Language Models (LLMs) used for automated note completion are exposed to the same problem. These systems are trained on historical clinical data, so they can learn and reproduce the prejudices found in past clinical documentation, generating racially or socioeconomically biased recommendations that mirror those of their human predecessors.

The Strategic Imperative: Safety, Not Just Ethics

For healthcare leaders, bias mitigation is not an optional ethics exercise; it is a clinical safety imperative.

Biased systems endanger patients and expose organizations to significant liability. Therefore, comprehensive assessment is required across the entire lifecycle:

- Data Collection: Who is represented in the dataset?

- Outcome Labeling: Are the ground truths accurate for all groups?

- Algorithm Development: Is the model optimizing for the majority at the expense of the minority?

- Post-Deployment: Are we monitoring for performance drift in specific subgroups?
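The post-deployment question in the checklist above lends itself to a simple automated check. The sketch below is one possible approach, not a prescribed method: the function name `flag_subgroup_drift`, the choice of metric, the 0.05 tolerance, and the numbers are all hypothetical. It compares each subgroup's current metric (for example, sensitivity) against its value at validation time and flags any group that has dropped by more than the tolerance.

```python
def flag_subgroup_drift(baseline, current, tolerance=0.05):
    """Return {group: drop} for groups whose metric fell more than `tolerance`.

    `baseline` and `current` map subgroup names to a higher-is-better
    metric such as sensitivity. Groups missing from `current` are skipped;
    in practice a missing group may itself deserve an alert.
    """
    flagged = {}
    for group, base_value in baseline.items():
        if group not in current:
            continue
        drop = base_value - current[group]
        if drop > tolerance:
            flagged[group] = round(drop, 4)
    return flagged


# Hypothetical metric values for illustration only.
baseline_sensitivity = {"female": 0.80, "male": 0.82, "medicaid": 0.78}
current_sensitivity = {"female": 0.70, "male": 0.81, "medicaid": 0.77}

alerts = flag_subgroup_drift(baseline_sensitivity, current_sensitivity)
print(alerts)
```

A fixed tolerance is the simplest design; small subgroups produce noisy estimates, so a real monitoring setup would likely also need confidence intervals or minimum sample sizes before raising an alert.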

Conclusion

As we move forward in this series, we will examine bias risks at each of these pipeline stages. Achieving equitable AI requires systematic attention at every stage. It is the only way to ensure that our innovations lift all patients, rather than leaving some behind.

Authored By: Padmasri Bhetanabhotla